The Enterprise Marketing AI Stack

Most conversations about AI in marketing start in the wrong place. They start with the model: which LLM is fastest, which tool generates the best first draft, whether GPT or Claude or Gemini produces better subject lines. These are interesting questions. They are also largely irrelevant to whether AI actually improves your marketing operations.

The reason is straightforward. The same AI model, given the same prompt, produces dramatically different output depending on what it knows about your brand, your audience, and what has worked before. A subject line generator operating from a blank prompt produces generic options. The same generator, connected to six months of open rate data, your brand voice rules, and your team's correction patterns, produces something your audience actually recognizes.

The difference isn't the model. It's the stack surrounding it.

That's the problem the enterprise marketing AI stack solves. Not which AI tool to buy, but how to architect the layers underneath it so that any AI tool produces output worth using.

Boston Consulting Group's 2025 research puts a sharp number on the gap: only 5% of companies have achieved AI value at scale. Another 35% are scaling and beginning to see returns. The remaining 60% report minimal value despite substantial investment. The top 5% achieve five times the revenue increases and three times the cost reductions of everyone else. The question is what separates them.

It isn't the model or the budget. It's the architecture. The companies generating real returns from AI have built the structural layers that give AI something meaningful to work with. The companies stuck in pilot mode bought the intelligence layer without building the foundation underneath it.

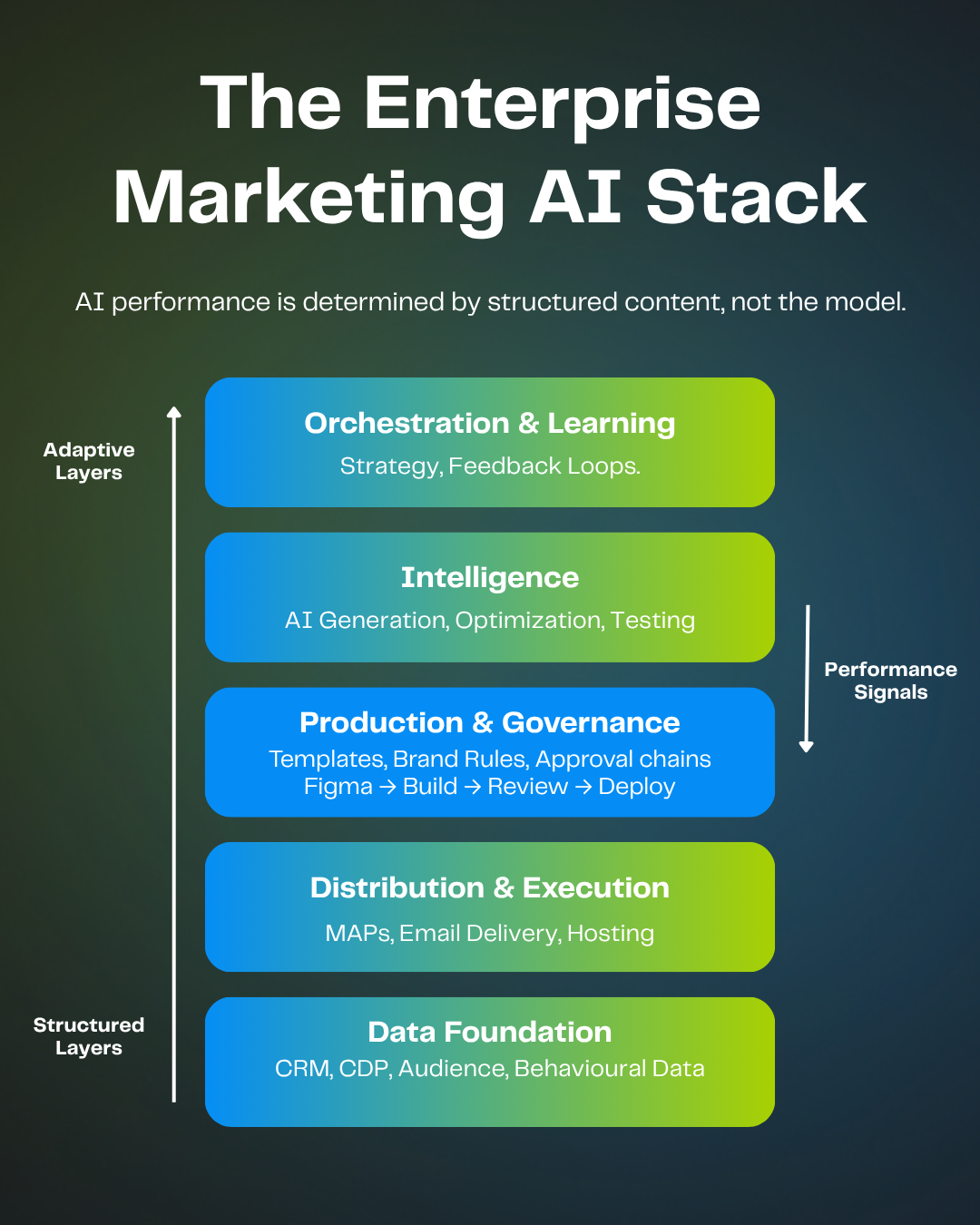

The five layers of the enterprise marketing AI stack

The stack reads from bottom to top. Each layer inherits the quality of the layer below it. Data quality determines distribution effectiveness. Distribution capability shapes what the production layer can deploy. Production constraints determine whether the intelligence layer produces trustworthy output. And orchestration makes the whole system compound rather than plateau.

The bottom three layers are structural. They provide the governed, reliable foundation that doesn't change based on which AI model is trending this quarter. The top two layers are adaptive. They're where AI and human judgment operate, adjusting based on what the structured layers provide.

The critical insight: the boundary between structural and adaptive sits right below the intelligence layer. Everything below that boundary needs to be solid before AI adds value. Everything above it benefits from AI but requires human judgment to direct it. Teams that invest heavily in the intelligence layer without building the structural layers below it are building on sand.
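One way to make the inheritance idea concrete is a toy model in which each layer's effective quality is capped by the layers beneath it. The quality scores and the `min` rule below are illustrative assumptions, not measurements and not a method taken from the research cited above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Layer:
    name: str
    kind: str           # "structural" or "adaptive"
    own_quality: float  # hypothetical 0.0-1.0 score, invented for illustration


# Bottom-to-top order, as described in the text. Scores are made up.
STACK = [
    Layer("Data foundation", "structural", 0.6),
    Layer("Distribution and execution", "structural", 0.9),
    Layer("Production and governance", "structural", 0.8),
    Layer("Intelligence", "adaptive", 0.95),
    Layer("Orchestration and learning", "adaptive", 0.9),
]


def effective_quality(stack):
    """Cap each layer's quality at the lowest quality found at or below it."""
    result = {}
    ceiling = 1.0
    for layer in stack:  # iterate from the bottom of the stack upward
        ceiling = min(ceiling, layer.own_quality)
        result[layer.name] = ceiling
    return result


if __name__ == "__main__":
    for name, score in effective_quality(STACK).items():
        print(f"{name}: {score}")
```

With these invented scores, a strong intelligence layer (0.95) is still capped at 0.6 by the weak data foundation, which is the "building on sand" problem in miniature.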

Layer 1: Data foundation

What lives here: CRM platforms, customer data platforms, audience segmentation engines, behavioral data, first-party data infrastructure.

Example tools: Salesforce Data Cloud, Adobe Real-Time CDP, Segment, Snowflake, mParticle, Tealium.

What it provides to the stack: Who you're talking to and what you know about them.

Every layer above this one inherits the quality of your data foundation. An AI system generating personalized email campaigns with incomplete CRM data, outdated segmentation, or disconnected behavioral signals produces output that looks sophisticated and performs poorly. The model doesn't know the data is bad. It optimizes for whatever you give it.

IBM's Institute for Business Value found that over a quarter of organizations estimate they lose more than $5 million annually due to poor data quality, with 45% of business leaders citing data accuracy as a leading barrier to scaling AI. That finding holds in marketing specifically: 54% of marketing ops professionals cite poor data quality and systems integration as their primary barrier to adopting new marketing technology. The data layer isn't a prerequisite that you address once and move past. It's an active constraint on every layer above it.

The organizations getting the strongest returns from AI tend to be the ones that invested in data infrastructure before AI became fashionable, not because they anticipated the need, but because clean data makes everything work better regardless of which tools sit on top.

The data foundation also determines the ceiling for personalization. AI-driven email personalization delivers a 41% increase in revenue when it has quality audience data to work with. Without it, personalization is shallow: first-name merge tags and basic segmentation that doesn't move metrics.

Layer 2: Distribution and execution

What lives here: Marketing automation platforms, email delivery infrastructure, landing page hosting, SMS and push notification systems.

Example tools: Adobe Marketo Engage, Salesforce Marketing Cloud, HubSpot, Oracle Eloqua, Iterable, Braze.

What it provides to the stack: Where campaigns go and how they reach audiences.

Marketing automation platforms were designed for orchestration and delivery. They manage audience segments, trigger automated journeys, handle send-time logic, and route campaigns across channels. They do this well. What they were not designed for is asset production.

The distinction matters because most enterprise teams started with their marketing automation platform (MAP) and tried to use it for everything. Building emails inside Marketo or Salesforce Marketing Cloud (SFMC) works until you have 50 people across six countries who need to produce branded content without breaking templates or requiring developer support. The native editors in most MAPs were built for the technical user who understands the platform, not for the marketer who needs to build a campaign by Thursday.

This is the architectural insight that explains why the production layer exists as a separate stack component. The distribution layer doesn't get better at production by adding features. It gets better at distribution by connecting to a dedicated production layer that handles the creation problem independently.

When this layer works well, it's invisible. Emails deploy on schedule, landing pages render correctly, and journeys trigger based on behavior. When it doesn't, the symptoms surface everywhere: broken rendering across email clients, failed personalization tokens, deployment bottlenecks that add days to every campaign cycle. In 2023, 62% of email teams required two or more weeks to produce a single email. Most of that time was spent navigating the gap between production and distribution, not doing creative work.

Layer 3: Production and governance

What lives here: Asset production platforms, brand template systems, approval workflows, design-to-deployment pipelines, collaborative review tools.

Example tools: Knak, Figma, Adobe Workfront, Bynder, Aprimo, Frontify.

What it provides to the stack: The structured environment that makes everything above it trustworthy.

This is the layer that determines whether AI produces generic output or something your brand actually claims. It is also the layer most enterprise stacks either don't have or have cobbled together from disconnected tools.

The production layer solves a specific architectural problem: how do you let dozens or hundreds of marketers build branded campaigns without either locking them into rigid templates that kill creativity or giving them open-ended tools that produce inconsistent results? The answer is tiered governance. Routine production flows freely within brand-controlled constraints. Exceptions, new formats, and high-stakes campaigns get human review. The system enforces the rules that shouldn't vary (brand colors, logo placement, legal footer requirements) while leaving room for the decisions that should (layout, messaging emphasis, visual hierarchy).
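Tiered governance can be sketched as two checks: fixed rules that block an asset outright, and a routing rule that decides whether a compliant asset needs human review. Everything here is hypothetical, including the `EmailAsset` fields, the color values, the footer check, and the tier names. It is a minimal sketch of the idea, not how any particular production platform implements it.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical brand rules; the values are placeholders.
APPROVED_COLORS = {"#0A2540", "#FFFFFF", "#00D4FF"}
REQUIRED_FOOTER = "Unsubscribe"


@dataclass
class EmailAsset:
    name: str
    colors: set
    footer_text: str
    template_id: Optional[str] = None  # None means a new, untemplated format
    high_stakes: bool = False


def check_fixed_rules(asset):
    """Return violations of the rules that should never vary."""
    violations = []
    off_brand = asset.colors - APPROVED_COLORS
    if off_brand:
        violations.append(f"off-brand colors: {sorted(off_brand)}")
    if REQUIRED_FOOTER not in asset.footer_text:
        violations.append("missing legal footer")
    return violations


def route(asset):
    """Block rule violations, send exceptions to review, let routine work flow."""
    violations = check_fixed_rules(asset)
    if violations:
        return "blocked", violations
    if asset.template_id is None or asset.high_stakes:
        return "human_review", []
    return "auto_approve", []
```

Layout, messaging emphasis, and visual hierarchy are deliberately absent from the checks: in this sketch, anything not listed as a fixed rule is left to the marketer.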

The Figma-to-MAP pipeline illustrates how this works in practice. Designers create in Figma, the tool they already know and prefer. One-click push to Knak preserves the design intent. Marketers add personalization, dynamic content, and approval routing. The finished asset syncs to the marketing automation platform without manual export, HTML coding, or developer involvement. What used to be a multi-day handoff between design, development, and marketing becomes a connected workflow where each role contributes without waiting for the others.

This matters for AI because of what the production layer contains: brand voice rules encoded as constraints, template performance history, approval patterns that document what gets sent back for revision and why, rendering data across email clients, and the accumulated corrections of hundreds of campaign cycles. When the intelligence layer has access to all of this, it produces output that reflects your brand. When it doesn't, it produces output that could belong to anyone.

Only 25% of marketing operations teams regularly use dedicated email and landing page production tools. The other 75% are building directly in their MAP, in general-purpose design tools, or through agency relationships. Each of those approaches works at small scale. None of them produces the structured data that makes the intelligence layer effective, because none of them captures the corrections, performance signals, and governance patterns that an AI system needs to improve over time.

The creation gap sits between what your team designs and what actually deploys. Close it with an ad hoc process and you get inconsistency. Close it with a governed production system and you get a foundation that makes every layer above it more effective.

Layer 4: Intelligence

What lives here: AI capabilities embedded within the production workflow. Subject line generation, first-draft creation, translation and localization, alt text for accessibility, image optimization, send-time prediction, content testing.

Example tools: Knak AI, Jasper, Writer, Movable Ink, Persado, Optimizely, Dynamic Yield.

What it provides to the stack: Speed and scale, but only as good as the context it receives from Layer 3.

Here is where most martech conversations start, and it's exactly the wrong place to start. The intelligence layer is the most visible part of the stack because it's where the output feels like it happens. AI generates a subject line. AI drafts an email. AI translates a campaign into eight languages. The output is impressive, and it arrives fast. 63% of marketers now use AI in their email marketing. Adoption isn't the problem. Context is.

Speed without context produces volume without quality. An AI that generates 50 subject line variants in 30 seconds saves time. An AI that generates 50 variants informed by which phrasing patterns drove opens for your specific audience over the last six months saves time and improves results. The difference is entirely determined by what Layer 3 provides.
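To illustrate the difference context makes, here is a minimal sketch that ranks generated subject lines using historical open rates by phrasing pattern. The pattern names, the open rates, and the crude `detect_pattern` heuristic are all invented for illustration. A real system would draw these signals from the production layer's performance history.

```python
# Hypothetical open rates by phrasing pattern for one audience segment.
PATTERN_OPEN_RATES = {"question": 0.31, "number": 0.27, "urgency": 0.18}


def detect_pattern(subject):
    """Very rough pattern detection; returns None when nothing matches."""
    lowered = subject.lower()
    if subject.rstrip().endswith("?"):
        return "question"
    if any(ch.isdigit() for ch in subject):
        return "number"
    if "today" in lowered or "last chance" in lowered:
        return "urgency"
    return None


def rank_variants(variants, pattern_rates, default=0.0):
    """Order variants by the historical open rate of their phrasing pattern."""
    return sorted(
        variants,
        key=lambda s: pattern_rates.get(detect_pattern(s), default),
        reverse=True,
    )
```

Called with an empty `pattern_rates` dictionary, every variant scores the same and the original order is returned unchanged. That is the blank-prompt case: plenty of output, no basis for choosing among it.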

McKinsey's State of AI research found that only 6% of organizations qualify as AI high performers who have moved past pilot stage and demonstrate meaningful business impact, even though 88% use AI regularly. The gap between those two numbers is the intelligence layer operating without a structured foundation. The 6% have connected AI to their production and governance infrastructure. The remaining 82% use AI regularly but as a standalone tool, disconnected from the context that would make it genuinely useful.

IRIS Software Group demonstrated what happens when the intelligence layer connects properly to the production layer. After implementing AI-assisted email campaigns with structured brand context and performance feedback, their open rates reached 50%, a 72% improvement over the 29% baseline. Click-through rates hit 21%, up 62% from 13%. Those numbers didn't come from a better model. They came from AI operating within a governed system that provided the brand context, performance history, and structured data the model needed to produce output that actually resonated.
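The improvement figures above are relative lifts, not percentage-point changes. A quick check of the arithmetic, using only the numbers quoted in the paragraph (the function name is ours):

```python
def relative_lift(baseline, result):
    """Relative change from baseline, expressed as a percentage."""
    return (result - baseline) / baseline * 100


open_lift = relative_lift(29, 50)  # open rate: 29% baseline, 50% result
ctr_lift = relative_lift(13, 21)   # click-through rate: 13% baseline, 21% result

print(f"Open rate lift: {open_lift:.1f}%")
print(f"Click-through lift: {ctr_lift:.1f}%")
```

The results round to 72% and 62%, matching the stated improvements. In percentage-point terms the gains are 21 points and 8 points respectively.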

Without that structure, the default outcome is less promising: over 70% of marketers have encountered AI-related incidents including hallucinations, bias, or off-brand output.

The intelligence layer includes capabilities that range from near-full automation to heavy human involvement:

| Capability | AI role | Human role | Where context matters |
|---|---|---|---|
| Subject line generation | Generates variants from performance data | Selects, adjusts for campaign context | Historical open rates by segment |
| Translation | Produces initial translation | Reviews for cultural nuance | Brand glossary, regional style guides |
| First-draft creation | Generates draft from brief + brand rules | Edits for voice, strategy, accuracy | Template performance, brand voice rules |
| Alt text | Generates from image analysis | Reviews for accuracy and brand alignment | Accessibility standards, brand terminology |
| Send-time optimization | Analyzes engagement patterns | Sets parameters, reviews recommendations | Segment-level behavioral data |
| Content testing | Runs dynamic variant testing | Defines test parameters, interprets results | Performance baselines, audience segments |

The pattern across all of these: AI does the generation, human judgment guides the direction, and the production layer provides the context that makes the output worth reviewing rather than rewriting.

Layer 5: Orchestration and learning

What lives here: Human-in-the-loop strategy, feedback loops that connect campaign performance back to AI inputs, decisions about what to automate versus what to keep human, measurement and attribution.

Example tools: Tableau, Looker, Domo, Adobe Marketo Measure, 6sense Revenue AI.

What it provides to the stack: The learning that makes the whole system compound rather than plateau.

Without this layer, each layer below it operates in isolation and the stack stays static. With it, the stack gets smarter every quarter.

The orchestration layer is where performance data flows back down through the stack. Campaign results refine the intelligence layer's understanding of what works. Quality signals tighten governance in the production layer. Audience behavior data updates the segmentation models in the data foundation. Deployment performance metrics inform platform strategy at the distribution layer. The stack becomes a closed loop rather than a linear pipeline.
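The four feedback paths in that paragraph can be written down as a simple routing table. The signal type names and the shape of each signal are assumptions made for this sketch. The mapping from signal to layer follows the paragraph above.

```python
# Which layer consumes which kind of performance signal.
# Keys are hypothetical signal type names; values are the layers from the text.
FEEDBACK_ROUTES = {
    "campaign_results": "Intelligence",
    "quality_signals": "Production and governance",
    "audience_behavior": "Data foundation",
    "deployment_performance": "Distribution and execution",
}


def route_feedback(signals):
    """Group incoming signals by the layer that should consume them."""
    by_layer = {}
    for signal in signals:
        layer = FEEDBACK_ROUTES[signal["type"]]  # KeyError on unknown types
        by_layer.setdefault(layer, []).append(signal)
    return by_layer
```

In a linear pipeline this function would not exist: results would be reported and stop there. The closed loop is the act of sending each signal back to the layer that can act on it.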

This is the layer where the four-dimension measurement framework lives. Time savings capture efficiency. Quality metrics capture whether AI improved the output or just accelerated it. Capacity metrics capture what the team can do now that it couldn't before. And learning metrics capture whether the system is getting better over time, whether each campaign cycle gives the AI more useful context than the last.
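As a rough sketch, the four dimensions could be tracked as one record per campaign cycle. The specific fields chosen to stand in for each dimension (for example, the share of AI drafts accepted as a proxy for learning) are assumptions for illustration, not part of the framework as stated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CycleMetrics:
    hours_saved: float         # time dimension
    revision_rate: float       # quality: share of assets sent back (lower is better)
    assets_shipped: int        # capacity dimension
    ai_drafts_accepted: float  # learning: share of AI drafts used with light edits


def is_compounding(history):
    """True if the learning metric rose in every consecutive cycle."""
    rates = [m.ai_drafts_accepted for m in history]
    return len(rates) >= 2 and all(b > a for a, b in zip(rates, rates[1:]))


# Invented example: hours saved stay flat while the learning metric climbs.
history = [
    CycleMetrics(120, 0.30, 40, 0.35),
    CycleMetrics(120, 0.24, 55, 0.48),
    CycleMetrics(120, 0.19, 70, 0.61),
]
print(is_compounding(history))
```

In this made-up history, a team tracking only `hours_saved` would see a flat line of 120 every cycle, even though quality, capacity, and learning are all improving.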

The teams that measure only time will never see the compounding. The teams that measure all four dimensions can make the case for continued investment because they can show that AI returns are increasing, not plateauing.

Forbes saved 18,000 hours annually by restructuring their production workflow. That's the time dimension. But the capacity dimension is more revealing: those hours went into new formats, audience development, and revenue-generating content the team previously lacked bandwidth to pursue. Their conversion rates doubled. The ROI case isn't "we saved time." It's "we built capabilities we didn't have."

Citrix went from 5 people creating emails to 80. FTI Consulting achieved a 316x increase in email output capacity. These aren't AI stories in isolation. They're stack stories. The results came from connecting the production layer to the distribution layer to the intelligence layer, with an orchestration layer that fed performance data back through the system.

Why the production layer is the leverage point

The marketing technology landscape now includes over 15,000 solutions, according to Scott Brinker's 2025 landscape count. And yet Gartner's Marketing Technology Survey found that marketers utilize only 33% of their martech stack's capabilities, down from 58% in 2020. More tools, less utilization. The stack framework explains why.

The production layer is the pivot between the structural and adaptive halves of the stack. Below it, data and distribution are essential but increasingly commodity. Every enterprise has a CRM. Every enterprise has a MAP. The tools differ, but the architectural function is the same.

Above the production layer, intelligence and orchestration are where differentiation happens. But they only differentiate when they have structured context to work with. An AI model without brand context is the same AI model every other company is using. An AI model operating within a governed production system that provides brand rules, template performance data, correction history, and audience insights is a competitive advantage that compounds over time.

The production layer is where that context lives. It's where brand rules get encoded as constraints, where template performance accumulates, where the gap between what your team designs and what your audience receives gets closed by a system rather than by heroic effort. Without a dedicated production layer, the intelligence layer operates in a vacuum: fast, capable, and generic.

This is also where 59% of marketing ops teams lack expertise. Not in data infrastructure. Not in their MAP. In the production and governance layer that would give their AI investments something meaningful to work with. The stack framework makes the underinvestment visible: you can see exactly where the missing layer sits and what the layers above it lose without it.

Building your stack from the bottom up

The order matters. The most common mistake is starting at Layer 4, buying an AI tool, and expecting it to solve problems that live in Layers 1 through 3. AI tools are easy to buy and impressive to demo. The structural layers they depend on are harder to build and less exciting to present to leadership. But the structural layers are what determine whether the AI investment produces lasting results or an impressive pilot that never scales.

If your data foundation has gaps, fix those first. If your MAP is handling production work it was never designed for, separate the production layer. If your brand governance depends on individual reviewers catching errors rather than a system enforcing consistency, build the governance infrastructure. Then, when you add the intelligence layer, it has something to work with.

BCG's research shows 60% of companies reporting minimal AI value despite substantial investment. The stack framework suggests why: they invested in the adaptive layers without building the structural layers those tools depend on. The model is fine. The context is missing.

The stacks that produce compounding returns share a common architecture. The data foundation is clean and connected. The distribution layer handles orchestration and delivery. The production layer provides the governed environment where brand context, template systems, and approval workflows create the structured data that AI needs. The intelligence layer operates within that context, getting better with every campaign cycle. And the orchestration layer feeds performance signals back through the system so every layer improves over time.

The question isn't which AI model to use. It's whether your stack gives any model the context it needs to produce work your audience actually recognizes as yours.